mindlap

A conversational AI app to build Voice Agents from conversations.

Project Description

Mindlap is a collaborative, AI-native product built for modern software teams that use tools like Codex and Claude. As coding velocity increased, teams struggled to maintain a shared understanding across specs, architecture, and execution.

Core

Product Lead | Founding Designer

Website

Tags

Research | Product Design | GTM

My role

Product Designer | Researcher | User Testing | GTM

Context

Modern software teams increasingly rely on AI tools to accelerate coding. While this improves output velocity, it also fragments project context. Requirements, architectural intent, and decisions are scattered across Jira, GitHub, Slack, and static documents that rarely evolve with the code.

As a result, teams spend significant time rebuilding context rather than executing on it. Documentation becomes outdated, specifications are skipped under pressure, and AI agents operate without a shared understanding of the system they are modifying.

Mindlap was designed to address this structural gap.

Research & Insights

I led qualitative research with engineering managers and developers through interviews, workflow mapping, and artifact analysis. The goal was to understand where alignment breaks down once AI enters the development process.

Key Insights

Teams do not fail at execution; they fail at context continuity

Documentation loses trust when it does not evolve alongside code

AI tools work best when they operate within a well-defined system boundary

Decision-making is continuous, but decision records are not

These insights revealed the need for a system that doesn't just store information, but actively binds intent, decisions, and execution over time.

They led directly to the concept of Laps: structured loops that bind intent, execution, and reflection.

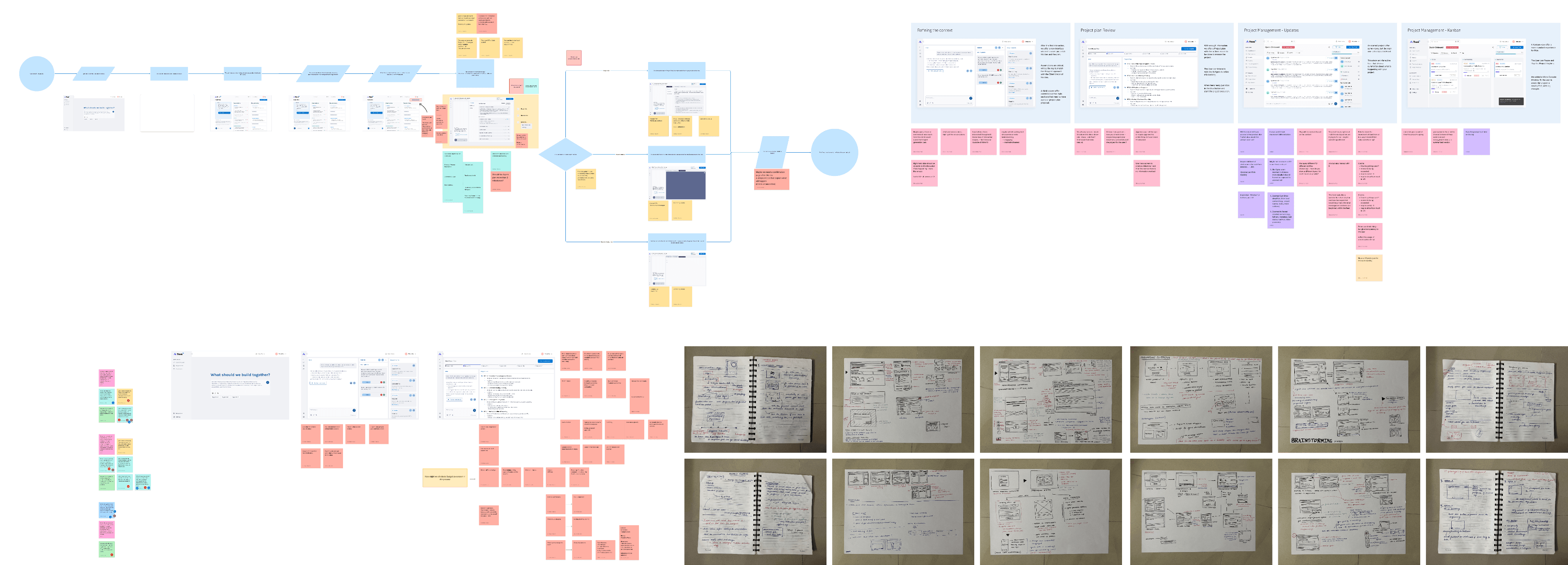

User Flow

This flow maps how teams move from intent to execution within Mindlap.

Rather than optimizing for linear task completion, the flow is designed to preserve context across specification, execution, review, and updates, ensuring that decisions remain traceable and shared as the system evolves.
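To make the flow concrete, here is a minimal sketch in Python of how one pass through it could be modeled. It is an illustration under stated assumptions, not Mindlap's actual implementation: the names `Lap`, `Stage`, `Decision`, `record`, `advance`, and `trace` are hypothetical. The only things taken from the flow above are the four stages and the idea that decisions stay attached to the stage where they were made.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Stage(Enum):
    """The four stages named in the flow (enum names are assumptions)."""
    SPECIFICATION = "specification"
    EXECUTION = "execution"
    REVIEW = "review"
    UPDATE = "update"


# Enum members iterate in definition order, which is the order of the flow.
STAGE_ORDER = list(Stage)


@dataclass
class Decision:
    stage: Stage   # stage the decision was made in
    author: str    # the person or agent who made it
    summary: str   # short human-readable record


@dataclass
class Lap:
    """Hypothetical model of one loop: an intent plus its decision trail."""
    intent: str
    stage: Stage = Stage.SPECIFICATION
    decisions: List[Decision] = field(default_factory=list)

    def record(self, author: str, summary: str) -> None:
        """Attach a decision to the current stage so it stays traceable."""
        self.decisions.append(Decision(self.stage, author, summary))

    def advance(self) -> Stage:
        """Move to the next stage; after UPDATE the loop returns to SPECIFICATION."""
        index = STAGE_ORDER.index(self.stage)
        self.stage = STAGE_ORDER[(index + 1) % len(STAGE_ORDER)]
        return self.stage

    def trace(self, stage: Stage) -> List[str]:
        """Return the summaries of all decisions recorded in a given stage."""
        return [d.summary for d in self.decisions if d.stage == stage]


# Example usage with made-up content.
lap = Lap(intent="Add rate limiting to the public API")
lap.record("product lead", "Limit applies per API key")
lap.advance()  # now in the execution stage
lap.record("agent", "Implemented limiter behind a feature flag")
```

The design choice this sketch illustrates is that advancing through the stages never discards earlier decisions. The record accumulates across the loop rather than being tied to a single linear task.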

The Solution - From fragmented cycles to structured execution cycles

Human–AI-in-the-loop by design

Mindlap is built around a human-and-AI-in-the-loop model, where AI agents accelerate execution while humans retain authorship, judgment, and accountability.

Decisions are not automated away; they are surfaced, reviewed, and fed back into the system as evolving context.

Why spec-driven development matters in AI-native workflows

As AI accelerates code generation, specifications become the primary interface between intent and execution.

Mindlap treats specs not as documentation but as executable infrastructure, continuously synchronized with code, decisions, and outcomes across each Lap.

Ongoing work & future directions

Ongoing work focuses on go-to-market learning, deeper MCP integrations, and improving how agents collaborate across specs, reviews, and execution.

The long-term goal is to make spec-driven development the primary interface for AI-native teams so intent, context, and execution evolve together.

Core Product Screens

Define intent

Execute with context-aware AI agents

Review outcomes and decisions

Update shared context automatically

Sketches/Iterations

Mindlap is an evolving platform.

Current work focuses on:

Go-to-market learning and real-world adoption

Deeper MCP and connector integrations (CLI)

Improving how AI agents collaborate across specs, reviews, and execution

The long-term goal is to make spec-driven development the primary interface for AI-native teams, ensuring that intent, context, and execution evolve together.

"Ideas lose momentum when tools get in the way. Voice makes building feel like brainstorming."

© 2025 ShraddhaPatil. All rights reserved